Multithreaded HTTP Proxy Server

A high-performance proxy server with concurrent request handling, LRU caching, and thread synchronization built on POSIX mutexes and semaphores.

(What this project does)

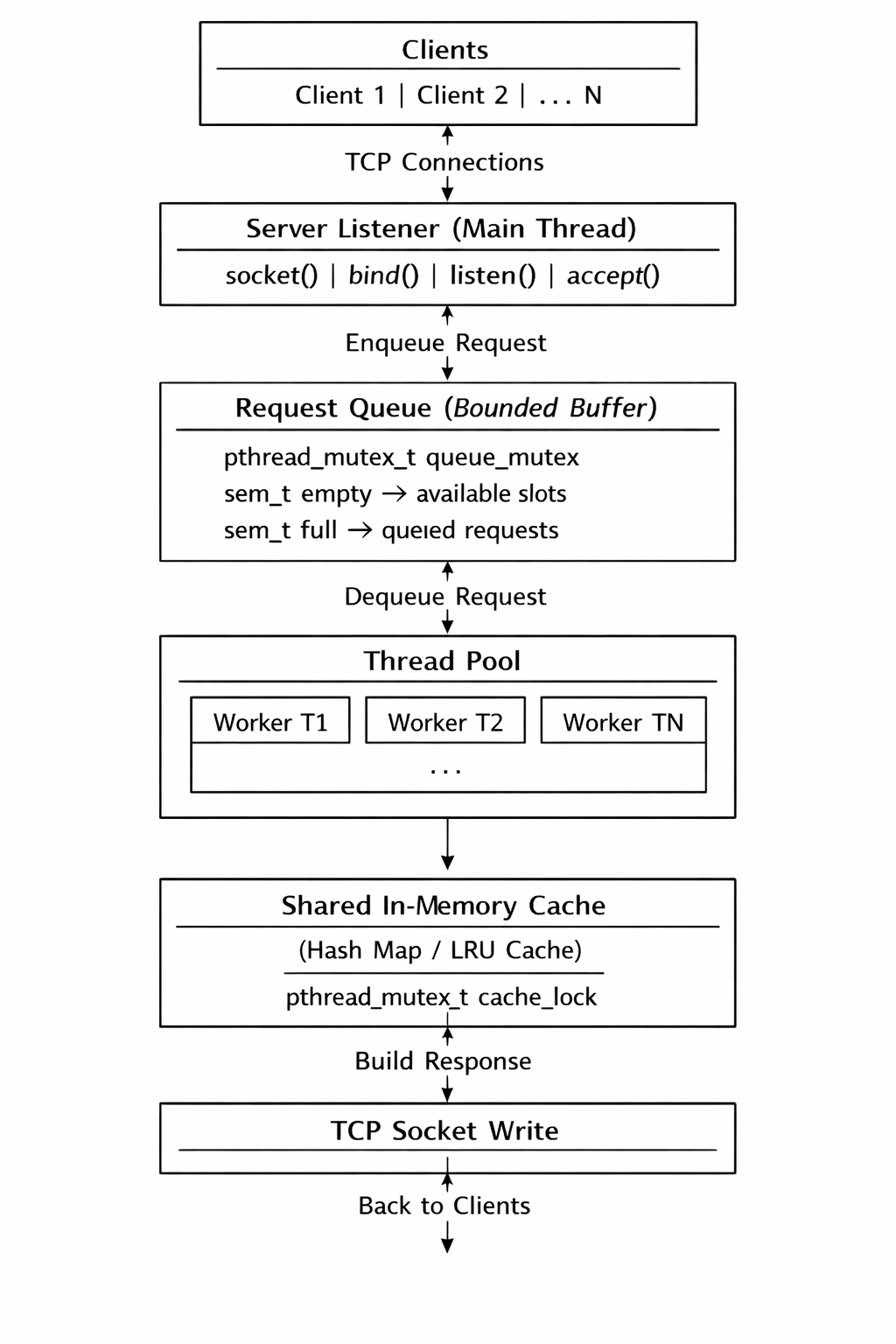

- Accepts multiple client connections over TCP

- Uses a thread pool to handle concurrent requests

- Implements a bounded request queue using semaphores

- Forwards HTTP requests to the target server on cache miss

- Stores responses in a shared LRU cache

- Serves cached responses directly on cache hit

- Ensures thread safety using mutexes and semaphores
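The README does not show how a worker extracts the target from a client request. Here is a hedged sketch. When a client talks to a forward proxy, HTTP/1.1 requires it to send the full URL (absolute-form) in the request line. A worker could therefore use that URL as the cache key and parse the upstream host and port out of it. The names `request_target` and `parse_request_line`, the buffer sizes, and the restriction to plain `http://` URLs are assumptions for illustration, not the project's actual code.

```c
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical parsed form of a proxy request line. */
typedef struct {
    char method[16];
    char url[2048]; /* full absolute-form URL, usable as the cache key */
    char host[256];
    int port;
    char path[2048]; /* origin-form path sent to the upstream server */
} request_target;

/* Parses "METHOD http://host[:port]/path HTTP/x.y". Returns 0 on success,
   -1 on malformed input or non-http URLs (assumption: no HTTPS/CONNECT). */
int parse_request_line(const char *line, request_target *t) {
    char version[16];
    if (sscanf(line, "%15s %2047s %15s", t->method, t->url, version) != 3)
        return -1;

    const char *prefix = "http://";
    if (strncmp(t->url, prefix, strlen(prefix)) != 0)
        return -1;
    const char *p = t->url + strlen(prefix);

    /* Host runs until ':' (explicit port) or '/' (start of path). */
    size_t host_len = strcspn(p, ":/");
    if (host_len == 0 || host_len >= sizeof t->host)
        return -1;
    memcpy(t->host, p, host_len);
    t->host[host_len] = '\0';
    p += host_len;

    t->port = 80; /* default HTTP port */
    if (*p == ':') {
        char *end;
        long port = strtol(p + 1, &end, 10);
        if (end == p + 1 || port <= 0 || port > 65535)
            return -1;
        t->port = (int)port;
        p = end;
    }

    if (*p == '\0')
        strcpy(t->path, "/");
    else if (*p == '/')
        snprintf(t->path, sizeof t->path, "%s", p);
    else
        return -1;
    return 0;
}
```

On a cache miss, the worker would connect to `host:port` and forward the request using `path`. In either case, `url` serves as the lookup key for the shared cache.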

(Why this project)

Most backend systems hide concurrency behind frameworks. This project intentionally avoids those abstractions and works directly with TCP sockets, POSIX threads, mutexes, and semaphores.

Building it helped me understand how concurrency, synchronization, and caching affect both performance and correctness in real servers.

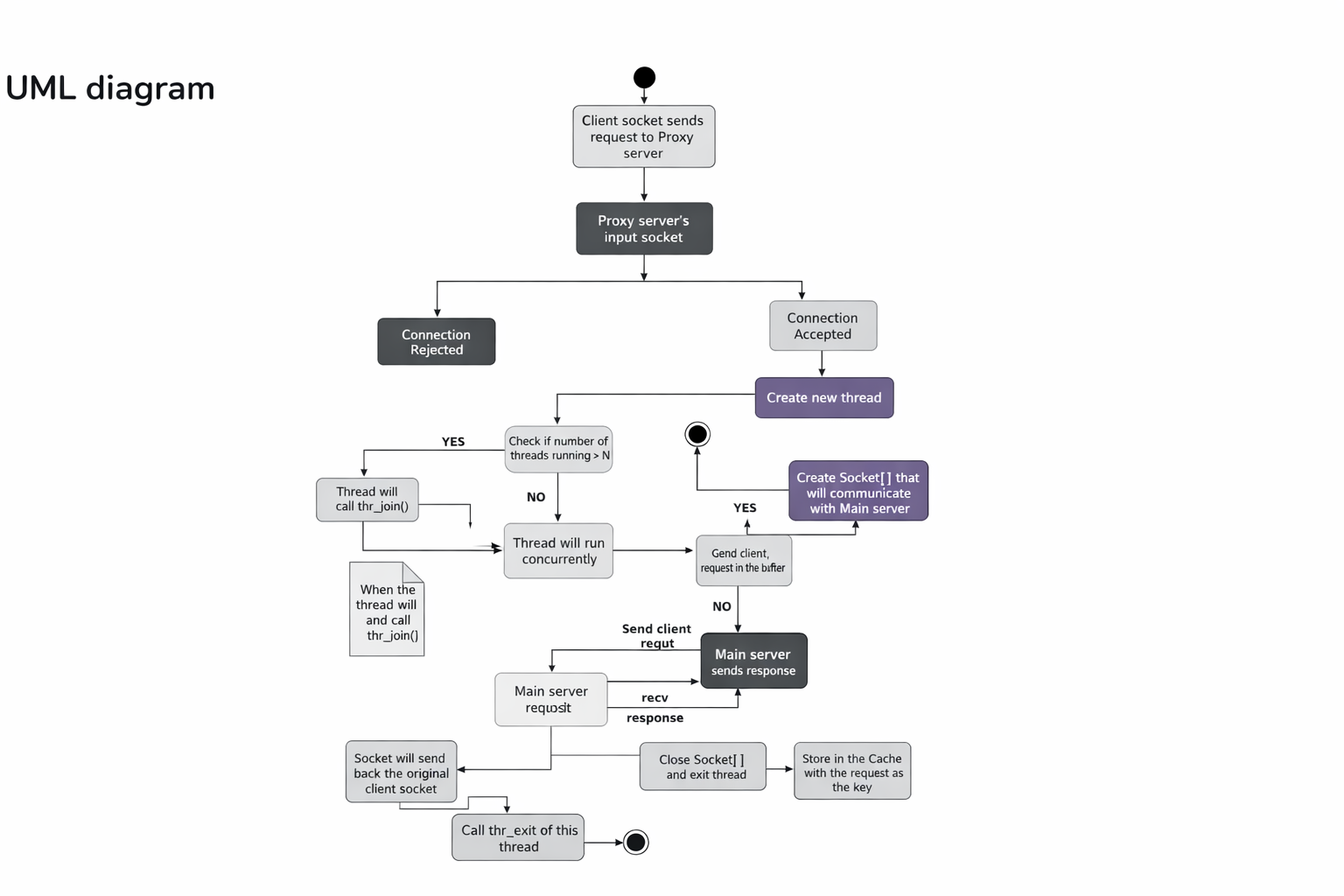

(High-level architecture)

(Request handling flow)

(Synchronization primitives)

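- `pthread_mutex_t`: protects shared data structures such as the request queue and the cache

- `sem_t empty`: tracks the number of free slots in the request queue

- `sem_t full`: tracks the number of pending requests

Worker threads block on `full` when there is no work, and the accepting thread blocks on `empty` when the queue is full. Together with the fixed-size thread pool, this design prevents race conditions, busy waiting, and unbounded thread creation.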
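Below is a minimal sketch of the bounded queue and worker pool described above. It assumes the queue stores accepted client socket descriptors. `QUEUE_CAPACITY`, `NUM_WORKERS`, the `-1` shutdown sentinel, and the stub `handle_client` (which only counts calls) are hypothetical, since the project's real sizes and handler are not stated. Unnamed semaphores created with `sem_init` work on Linux but are not supported on macOS.

```c
#include <pthread.h>
#include <semaphore.h>
#include <stdatomic.h>

#define QUEUE_CAPACITY 8 /* hypothetical bound on pending requests */
#define NUM_WORKERS 4    /* hypothetical thread pool size */

typedef struct {
    int items[QUEUE_CAPACITY]; /* accepted client socket descriptors */
    int head;                  /* next slot to dequeue */
    int tail;                  /* next slot to enqueue */
    pthread_mutex_t lock;      /* protects items, head and tail */
    sem_t empty;               /* free slots remaining */
    sem_t full;                /* pending requests */
} request_queue;

int queue_init(request_queue *q) {
    q->head = q->tail = 0;
    if (pthread_mutex_init(&q->lock, NULL) != 0) return -1;
    if (sem_init(&q->empty, 0, QUEUE_CAPACITY) != 0) return -1;
    if (sem_init(&q->full, 0, 0) != 0) return -1;
    return 0;
}

/* Producer side (accept loop): blocks while the queue is full. */
void queue_push(request_queue *q, int client_fd) {
    sem_wait(&q->empty); /* reserve a slot before taking the lock */
    pthread_mutex_lock(&q->lock);
    q->items[q->tail] = client_fd;
    q->tail = (q->tail + 1) % QUEUE_CAPACITY;
    pthread_mutex_unlock(&q->lock);
    sem_post(&q->full); /* announce one pending request */
}

/* Consumer side (worker thread): blocks while the queue is empty. */
int queue_pop(request_queue *q) {
    sem_wait(&q->full);
    pthread_mutex_lock(&q->lock);
    int client_fd = q->items[q->head];
    q->head = (q->head + 1) % QUEUE_CAPACITY;
    pthread_mutex_unlock(&q->lock);
    sem_post(&q->empty); /* free the slot for the producer */
    return client_fd;
}

static atomic_int handled = 0; /* demo counter for the stub handler */

/* Hypothetical stub: a real worker would read the request, check the
   cache, forward on a miss, and close the socket. */
static void handle_client(int client_fd) {
    (void)client_fd;
    atomic_fetch_add(&handled, 1);
}

/* Each pool thread loops until it receives a negative (sentinel) descriptor. */
static void *worker(void *arg) {
    request_queue *q = arg;
    for (;;) {
        int fd = queue_pop(q);
        if (fd < 0) break;
        handle_client(fd);
    }
    return NULL;
}
```

At startup, a server would call `pthread_create` `NUM_WORKERS` times with `worker`, then `queue_push` each descriptor returned by `accept`. The order of operations matters. The producer waits on `empty` *before* locking the mutex. If it held the lock while waiting on a full queue, no consumer could acquire the lock to pop, and the pool would deadlock.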

(Why data is handled in chunks)

HTTP responses are received over TCP, which is a stream-based protocol. There is no guarantee that the entire response will arrive in a single read.

Reading data in chunks:

- Ensures correctness for partial reads

- Avoids large memory allocations

- Supports responses of unknown or large size

- Allows streaming data directly to the client

Production proxies forward responses the same way. They relay each chunk to the client as it arrives instead of buffering the entire response first.
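Below is a minimal sketch of a chunked relay loop. It assumes blocking file descriptors and an upstream that closes the connection when the response ends (for example, because the proxy forwards the request with `Connection: close`). `CHUNK_SIZE`, `write_all`, and `relay_chunks` are hypothetical names; the project's actual buffer size is not stated. The loop handles short transfers in both directions: `read` may return fewer bytes than requested, and `write` may accept fewer bytes than offered.

```c
#include <errno.h>
#include <sys/types.h>
#include <unistd.h>

#define CHUNK_SIZE 4096 /* hypothetical chunk size */

/* Writes all n bytes, retrying after short writes and EINTR.
   Returns 0 on success, -1 on error. */
static int write_all(int fd, const char *buf, size_t n) {
    while (n > 0) {
        ssize_t w = write(fd, buf, n);
        if (w < 0) {
            if (errno == EINTR) continue;
            return -1;
        }
        buf += w;
        n -= (size_t)w;
    }
    return 0;
}

/* Relays bytes from src_fd to dst_fd one chunk at a time until EOF.
   Returns the total number of bytes relayed, or -1 on error. */
static long relay_chunks(int src_fd, int dst_fd) {
    char buf[CHUNK_SIZE];
    long total = 0;
    for (;;) {
        ssize_t r = read(src_fd, buf, sizeof buf);
        if (r == 0) break; /* upstream closed: response complete */
        if (r < 0) {
            if (errno == EINTR) continue;
            return -1;
        }
        if (write_all(dst_fd, buf, (size_t)r) != 0) return -1;
        total += r;
    }
    return total;
}
```

To fill the cache during the relay, a worker could also append each chunk to a growing buffer and insert it once the relay finishes. Writing to a socket whose peer has disconnected can raise `SIGPIPE`. Servers commonly ignore that signal, or on Linux use `send` with `MSG_NOSIGNAL`.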

(Cache design - LRU)

- The cache is shared across all worker threads

- Each entry maps a request URL to its response

- On every cache hit, the access time is updated

- When the cache reaches capacity, the least recently used entry is evicted

- All cache operations are protected using a mutex

Repeated requests for the same URL are served from memory without contacting the target server, which significantly reduces their response time.
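Below is a minimal sketch of the cache described above. Following the README, each entry records an access time, and eviction scans for the oldest entry. Here the "time" is a logical counter advanced under the mutex, so two accesses can never tie. `CACHE_SLOTS`, `lru_cache`, `cache_get`, and `cache_put` are hypothetical. Capacity is counted in entries rather than bytes, which may differ from the project.

```c
#include <pthread.h>
#include <stdlib.h>
#include <string.h>

#define CACHE_SLOTS 4 /* hypothetical capacity, counted in entries */

typedef struct {
    char *url;               /* cache key; NULL marks a free slot */
    char *response;          /* cached response bytes */
    size_t len;              /* number of bytes in response */
    unsigned long last_used; /* logical access time */
} cache_entry;

typedef struct {
    cache_entry slots[CACHE_SLOTS];
    unsigned long clock;  /* logical clock, advanced on every access */
    pthread_mutex_t lock; /* guards slots and clock */
} lru_cache;

void cache_init(lru_cache *c) {
    memset(c->slots, 0, sizeof c->slots);
    c->clock = 0;
    pthread_mutex_init(&c->lock, NULL);
}

/* On a hit, refreshes the entry's access time and returns a malloc'd copy
   of the response (caller frees). Returns NULL on a miss. */
char *cache_get(lru_cache *c, const char *url, size_t *len) {
    char *copy = NULL;
    pthread_mutex_lock(&c->lock);
    for (int i = 0; i < CACHE_SLOTS; i++) {
        cache_entry *e = &c->slots[i];
        if (e->url && strcmp(e->url, url) == 0) {
            e->last_used = ++c->clock;
            copy = malloc(e->len + 1);
            if (copy) {
                memcpy(copy, e->response, e->len);
                *len = e->len;
            }
            break;
        }
    }
    pthread_mutex_unlock(&c->lock);
    return copy;
}

/* Stores a copy of (url, response). Reuses the slot holding the same URL,
   else a free slot, else evicts the least recently used entry. */
int cache_put(lru_cache *c, const char *url, const char *resp, size_t len) {
    size_t url_len = strlen(url);
    char *key = malloc(url_len + 1);
    char *val = malloc(len + 1);
    if (!key || !val) {
        free(key);
        free(val);
        return -1;
    }
    memcpy(key, url, url_len + 1);
    memcpy(val, resp, len);

    pthread_mutex_lock(&c->lock);
    int victim = -1;
    for (int i = 0; i < CACHE_SLOTS && victim < 0; i++) /* same URL cached */
        if (c->slots[i].url && strcmp(c->slots[i].url, url) == 0) victim = i;
    for (int i = 0; i < CACHE_SLOTS && victim < 0; i++) /* else a free slot */
        if (!c->slots[i].url) victim = i;
    if (victim < 0) { /* else evict the entry with the oldest access time */
        victim = 0;
        for (int i = 1; i < CACHE_SLOTS; i++)
            if (c->slots[i].last_used < c->slots[victim].last_used) victim = i;
    }
    cache_entry *e = &c->slots[victim];
    free(e->url); /* free(NULL) is a no-op for a free slot */
    free(e->response);
    e->url = key;
    e->response = val;
    e->len = len;
    e->last_used = ++c->clock;
    pthread_mutex_unlock(&c->lock);
    return 0;
}
```

`cache_get` returns a private copy that the caller must `free`. This lets a worker keep sending the response after releasing the lock, even if another thread evicts the entry in the meantime. The linear scan costs O(n) per operation. If the cache grows large, a hash map combined with a doubly linked list gives O(1) lookups and evictions.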

(Performance results)

Cache latency comparison

This shows a large improvement when responses are served directly from memory.

Load testing

The proxy was tested using ApacheBench:

The server remained stable under concurrent load.